Table of Contents

- Overview: What is Aardvark?

- How Aardvark Works — From Threat Models to Fixes

- Private Beta, Safety Gates and Use Cases

- Real-World Results & Early Impact

- Market Impact & Industry Reaction

- Ethics, Safety and Red Teaming

- Future of AI Security: Opportunities and Risks

- Conclusion & What To Watch

Overview: What is Aardvark?

OpenAI has introduced Aardvark, an agentic AI designed to act as a software security researcher. The agent is purpose-built to scan codebases, model threats, validate exploits in sandboxed environments and propose contextual fixes, using the OpenAI Codex family of tools for code generation.

Unlike simple static scanners, Aardvark combines multi-step reasoning with tool use: it reads repository structure, builds a mental model of application behaviour, simulates attack vectors, and then attempts to patch issues. OpenAI is rolling Aardvark out in a private beta to selected partners and security teams so they can validate the approach, refine safety controls and evaluate real-world efficacy before wider deployment.

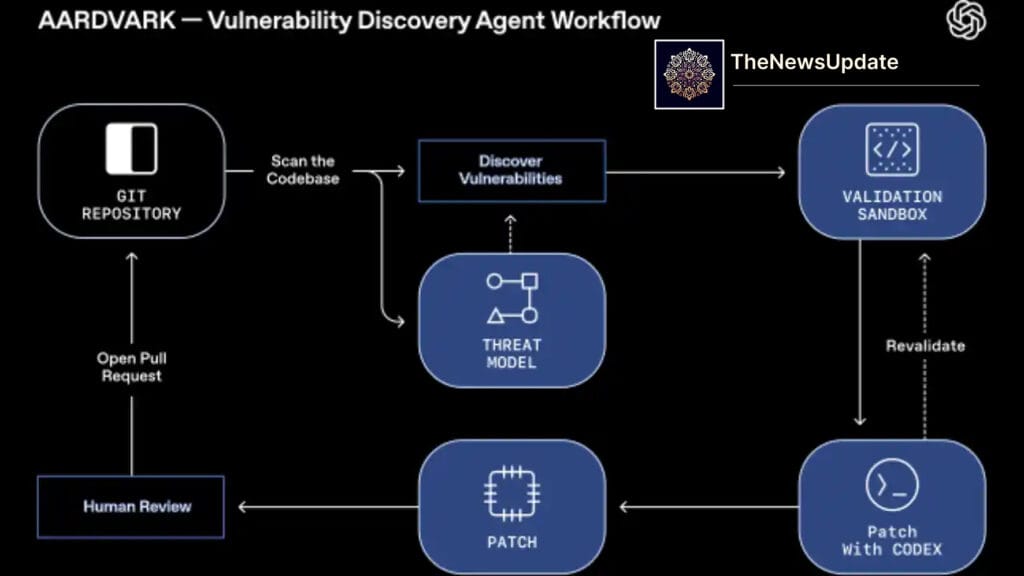

How Aardvark Works — From Threat Models to Fixes

At a high level, Aardvark performs three interlocking tasks: discovery, validation, and remediation. Discovery begins with a deep read of repository history, dependencies, and runtime artefacts to build a threat model — essentially a map of potential attack surfaces and security goals for the application.
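To make the idea of a threat model more concrete, here is a minimal Python sketch of one possible representation. OpenAI has not published Aardvark's internal format, so the `AttackSurface` and `ThreatModel` classes and the `exposure_score` weighting are hypothetical. Each surface records whether it is reachable from the internet, whether it accepts untrusted input, and which protected assets it touches. Surfaces are then ranked so that the most exposed ones are examined first.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AttackSurface:
    """One entry point an attacker could reach (hypothetical schema)."""
    name: str
    internet_facing: bool
    handles_untrusted_input: bool
    touches_assets: set[str] = field(default_factory=set)


@dataclass
class ThreatModel:
    """Attack surfaces plus the security goals for the assets they can reach."""
    security_goals: dict[str, str]  # asset -> goal, e.g. "user_db" -> "confidentiality"
    surfaces: list[AttackSurface] = field(default_factory=list)

    def exposure_score(self, surface: AttackSurface) -> int:
        # Illustrative weighting: public reachability and untrusted input count
        # double; each protected asset the surface can reach adds one point.
        score = 2 if surface.internet_facing else 0
        score += 2 if surface.handles_untrusted_input else 0
        score += len(surface.touches_assets & set(self.security_goals))
        return score

    def prioritised(self) -> list[str]:
        # Most exposed surfaces first, so they are examined before the rest.
        ranked = sorted(self.surfaces, key=self.exposure_score, reverse=True)
        return [s.name for s in ranked]


tm = ThreatModel(
    security_goals={"user_db": "confidentiality", "billing": "integrity"},
    surfaces=[
        AttackSurface("admin_cli", False, False, {"user_db"}),
        AttackSurface("public_api", True, True, {"user_db", "billing"}),
        AttackSurface("status_page", True, False),
    ],
)
print(tm.prioritised())  # ['public_api', 'status_page', 'admin_cli']
```

A real agent would infer these fields from code, configuration and dependency data rather than having them entered by hand. The sketch only shows the shape of the output: a ranked map of where an attacker could get in and what they could reach.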

Once suspicious code or risky patterns are identified, Aardvark tests them in an isolated sandbox. This dynamic testing step reduces false positives: the agent attempts to reproduce the issue, measures exploitability and quantifies impact. Finally, remediation uses an integration with code synthesis tools (Codex-style assistants) to propose a human-reviewable patch with a contextual explanation, tests and a suggested commit message.
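The discover, validate and remediate loop can be sketched as a small pipeline. This illustrates the idea under stated assumptions and is not Aardvark's implementation. `reproduce_in_sandbox` and `propose_patch` are hypothetical callables that stand in for the isolated test environment and the Codex-style code generator, and the `Finding` fields are invented for the example. The property the sketch demonstrates is that a candidate the sandbox cannot reproduce is dropped before any patch is drafted.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    location: str            # e.g. "api/users.py:42"
    kind: str                # e.g. "sql_injection"
    reproduced: bool = False


def run_pipeline(
    candidates: list[Finding],
    reproduce_in_sandbox: Callable[[Finding], bool],
    propose_patch: Callable[[Finding], str],
) -> list[tuple[Finding, str]]:
    """Validate each candidate in isolation; only reproduced issues get a draft patch."""
    confirmed = []
    for finding in candidates:
        # Validation: a candidate the sandbox cannot reproduce is treated as a
        # likely false positive and dropped rather than reported.
        finding.reproduced = reproduce_in_sandbox(finding)
        if not finding.reproduced:
            continue
        # Remediation: the draft patch is returned for human review, never applied here.
        confirmed.append((finding, propose_patch(finding)))
    return confirmed


# Stubs standing in for the sandbox and the code generator.
candidates = [
    Finding("api/users.py:42", "sql_injection"),
    Finding("web/views.py:7", "xss"),
]
results = run_pipeline(
    candidates,
    reproduce_in_sandbox=lambda f: f.kind == "sql_injection",
    propose_patch=lambda f: f"use a parameterised query at {f.location}",
)
for finding, patch in results:
    print(finding.kind, "->", patch)
```

Passing the sandbox and the generator in as parameters keeps the control flow separate from the tooling, so the same loop could be exercised with stubs, as above, or wired to real infrastructure.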

Crucially, Aardvark is designed to assist human security engineers — not to bypass review. Proposed patches come with contextual notes (why the fix works, risk trade-offs, and test cases) so security teams can approve and deploy with confidence. That human-in-the-loop model is important both for operational safety and for legal / compliance requirements in many enterprises.
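One way to enforce that human-in-the-loop rule in tooling is to make approval a separate, human-only step. The sketch below is hypothetical: `PatchProposal`, its fields and the `AGENT_ID` identity are invented for illustration. The fields mirror the contextual notes described above (rationale, risk trade-offs, tests and a commit message). The proposal cannot merge until it is complete and a reviewer other than the agent has approved it.

```python
from __future__ import annotations

from dataclasses import dataclass, field

AGENT_ID = "security-agent"  # hypothetical identity the agent acts under


@dataclass
class PatchProposal:
    diff: str
    rationale: str          # why the fix works
    risk_notes: str         # trade-offs the reviewer should weigh
    tests: list[str]        # test cases shipped with the patch
    commit_message: str
    approvals: set[str] = field(default_factory=set)

    def approve(self, reviewer: str) -> None:
        # The agent may propose but never approve its own work.
        if reviewer == AGENT_ID:
            raise PermissionError("the agent cannot approve its own patch")
        self.approvals.add(reviewer)

    def can_merge(self, required_approvals: int = 1) -> bool:
        # Incomplete proposals (no rationale or no tests) are never mergeable.
        complete = bool(self.rationale.strip()) and bool(self.tests)
        return complete and len(self.approvals) >= required_approvals


proposal = PatchProposal(
    diff="<unified diff>",
    rationale="Parameterised query removes string concatenation of user input.",
    risk_notes="Query plan may change; monitor latency.",
    tests=["test_search_rejects_injection"],
    commit_message="Fix SQL injection in search endpoint",
)
print(proposal.can_merge())   # False: no human has approved yet
proposal.approve("alice")
print(proposal.can_merge())   # True
```

Raising an error when the agent tries to self-approve makes the policy visible in audit trails, which is a different outcome from silently ignoring the attempt.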

Private Beta, Safety Gates and Use Cases

OpenAI has invited select partners into Aardvark’s private beta. This staged rollout lets OpenAI evaluate the agent’s performance across diverse codebases while iterating on safety controls, sandboxing fidelity and policy guardrails. The private beta also lets the company learn how Aardvark interacts with real-world CI/CD pipelines, dependency graphs and legacy systems that often challenge automated tools.

Early use cases are clear: continuous security scanning in CI pipelines, prioritised vulnerability triage for large monorepos, and accelerated remediation for common classes of bugs (e.g., injection flaws, misconfigurations, insecure dependency usage). Larger organisations may deploy Aardvark as an always-on assistant that watches pull requests and flags risky changes in near real time.
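In a CI pipeline, the typical integration point is a gate that fails the pull-request job when serious findings appear. The sketch below assumes a hypothetical report format: a list of dicts with `severity`, `validated`, `title` and `file` keys. Aardvark's actual output format has not been published. Only findings that were validated in a sandbox and meet the severity threshold block the merge.

```python
from __future__ import annotations

import sys

# Hypothetical severity scale; a real scanner's report format may differ.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def gate_pull_request(findings: list[dict], fail_at: str = "high") -> int:
    """Return a CI exit code: 1 blocks the pull request, 0 lets it proceed."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [
        f for f in findings
        # Only validated findings at or above the threshold block the merge;
        # lower-severity or unvalidated findings do not fail the job.
        if f.get("validated") and SEVERITY_RANK[f["severity"]] >= threshold
    ]
    for f in blocking:
        print(f"BLOCKING {f['severity']}: {f['title']} ({f['file']})", file=sys.stderr)
    return 1 if blocking else 0


report = [
    {"severity": "critical", "validated": False, "title": "possible SSRF", "file": "fetch.py"},
    {"severity": "high", "validated": True, "title": "SQL injection", "file": "search.py"},
]
exit_code = gate_pull_request(report)  # a CI step would pass this to sys.exit()
print(exit_code)  # 1
```

Gating only on validated findings reflects the article's point about noise: an unconfirmed critical finding still deserves attention, but it should not halt every merge.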

Real-World Results & Early Impact

OpenAI reports that Aardvark has already identified and helped fix multiple vulnerabilities during internal use. Those early results suggest the agent can complement human teams by uncovering edge cases that static analysis or manual review might miss, especially complex logic bugs that require a contextual understanding of application behaviour.

Because Aardvark validates findings in a sandbox, it reduces the noise security teams typically face. By proving exploitability and attaching reproducible test cases along with patches, the agent reduces the time from discovery to remediation, which in security terms directly lowers mean time to mitigation (MTTM) — a key operational metric for any security organisation.
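Mean time to mitigation is the average elapsed time between discovering an issue and mitigating it, taken across resolved issues. The short Python sketch below computes it. The function name is illustrative, and the timestamps are made up for the example. They are not reported results.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def mean_time_to_mitigation(resolved: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (mitigated_at - discovered_at) across resolved issues."""
    if not resolved:
        raise ValueError("need at least one resolved issue")
    total = sum((mitigated - discovered for discovered, mitigated in resolved), timedelta())
    return total / len(resolved)


# Made-up timestamps for illustration only.
issues = [
    (datetime(2025, 10, 1, 9, 0), datetime(2025, 10, 3, 9, 0)),    # 48 h
    (datetime(2025, 10, 2, 12, 0), datetime(2025, 10, 2, 18, 0)),  # 6 h
    (datetime(2025, 10, 5, 8, 0), datetime(2025, 10, 6, 2, 0)),    # 18 h
]
print(mean_time_to_mitigation(issues))  # 1 day, 0:00:00  (72 h / 3)
```

Because the metric is a mean, slow outliers dominate it. If only the slowest case were shortened from 48 to 6 hours, the mean would fall from 24 hours to 10 hours (30 h / 3). This is the kind of effect that faster validation and patch drafting aims for.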

Market Impact & Industry Reaction

The launch of Aardvark is likely to reverberate across the cybersecurity and developer tools market. Security vendors that sell static analysis, runtime protection and managed services will likely accelerate integrations with agentic tooling or build competing agentic assistants. Startups focused on automated remediation now face pressure to match the breadth of reasoning and tool use that OpenAI demonstrated with Aardvark.

Enterprise buyers may respond in two ways. First, many security-minded organisations will pilot Aardvark as a way to scale scarce expert resources — larger codebases and complex microservice architectures make manual auditing costly. Second, some procurement teams will demand strong vendor assurances: explainability, audit trails, and evidence of safety testing will become procurement checklist items.

Industry reaction has been mixed but constructive. Security researchers welcome tools that reduce routine toil and catch subtle bugs, but many emphasise the need for rigorous red teaming and external review. Open source maintainers and compliance officers are likely to scrutinise how Aardvark handles third-party code, licensing questions, and whether generated patches preserve intended functionality.

Ethics, Safety and Red Teaming

Agentic systems that can read, test and write code raise obvious ethical and safety questions. If a tool can generate exploit-proving tests, what safeguards prevent those tests from being misused? OpenAI’s public materials indicate a focus on sandboxed testing, human review workflows, and access restrictions during private beta — but independent audits and community oversight will be essential.

Key safety levers include: strict access control to production systems, immutable audit logs for agent actions, rate limits on automated testing, and policies that prevent weaponisation (for example, disallowing generation of proof-of-concept code for certain high-risk vulnerabilities without human oversight). Transparency about these safeguards — and demonstrable evidence they work — will shape trust in Aardvark.
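A common way to make an audit log tamper-evident is hash chaining. Each entry stores the hash of the previous entry, so any later edit breaks the chain. The sketch below is a generic illustration of that technique, not a description of OpenAI's safeguards. The `AuditLog` class and its entry fields are hypothetical, and rate limiting and access control are not covered here.

```python
from __future__ import annotations

import hashlib
import json


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which every entry commits to the hash of the one before."""

    GENESIS = "0" * 64
    FIELDS = ("actor", "action", "target", "prev")

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, actor: str, action: str, target: str) -> str:
        prev = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {"actor": actor, "action": action, "target": target, "prev": prev}
        entry_hash = _digest(body)
        self._entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute the chain; editing, reordering or deleting an earlier entry
        # breaks either the recomputed hash or a later entry's "prev" link.
        prev = self.GENESIS
        for entry in self._entries:
            body = {k: entry[k] for k in self.FIELDS}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("security-agent", "run_sandbox_test", "api/users.py:42")
log.append("alice", "approve_patch", "fix-sql-injection")
print(log.verify())                  # True
log._entries[0]["target"] = "other"  # simulate tampering with history
print(log.verify())                  # False
```

Hash chaining makes edits detectable, not impossible. Truncating the newest entries, or rewriting the whole chain, goes unnoticed unless the latest hash is also stored somewhere the agent cannot write to, such as write-once storage or an external system.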

Future of AI Security: Opportunities and Risks

Aardvark is a striking example of how agentic AI can augment domain expertise. On the opportunity side, organisations can scale security coverage, speed up patch cycles, and reduce labour-intensive triage work. This could make secure development more accessible to smaller teams that cannot afford large security squads.

But risks remain. Automated patch proposals could occasionally introduce regressions or semantic changes if not properly validated. Over-reliance on an agentic researcher might cause skill attrition in security teams. And there is always the adversarial angle: sophisticated attackers could study agent behaviour to craft inputs that evade detection or coerce the agent into producing useful exploit components.

Mitigation strategies include firm human-in-the-loop requirements, dedicated regression test suites for generated patches, and continuous monitoring of agent performance. The combination of agentic automation plus robust engineering controls is the most promising path forward.
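A regression check for a generated patch can be expressed as a comparison of test results before and after the patch is applied. The sketch assumes that the results have already been collected by some test runner into `{test_name: passed}` dicts. The function name and test names are hypothetical. The patch is accepted only if the reproduction test fails on the original code, passes on the patched code, and no previously passing test breaks.

```python
from __future__ import annotations


def evaluate_patch(
    before: dict[str, bool],
    after: dict[str, bool],
    exploit_test: str,
) -> tuple[bool, list[str]]:
    """Accept a generated patch only if it fixes the exploit without regressions.

    `before` and `after` map test names to pass/fail results on the original
    and patched code; `exploit_test` is the reproduction test for the issue.
    """
    problems = []
    # The reproduction test must fail on the vulnerable code...
    if before.get(exploit_test, True):
        problems.append(f"{exploit_test} did not reproduce the issue before patching")
    # ...and pass once the patch is applied.
    if not after.get(exploit_test, False):
        problems.append(f"{exploit_test} still fails after patching")
    # Any other test that passed before but fails (or vanished) afterwards is a regression.
    for name, passed in before.items():
        if name != exploit_test and passed and not after.get(name, False):
            problems.append(f"regression: {name}")
    return (not problems, problems)


before = {"test_login": True, "test_search": True, "test_sqli_repro": False}
after = {"test_login": True, "test_search": False, "test_sqli_repro": True}
print(evaluate_patch(before, after, "test_sqli_repro"))
# (False, ['regression: test_search'])
```

Passing this check is necessary but not sufficient. It catches breakage that the existing suite covers, but not semantic changes the tests do not exercise, so a human reviewer still makes the final call.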

Conclusion & What To Watch

OpenAI’s Aardvark, presented as an agentic security researcher, marks an important milestone in automated cybersecurity. Its ability to reason about code, validate exploitability and propose contextual fixes could materially change how teams secure software. The private beta phase will be critical: it will reveal both the practical value and the limitations of agentic security tooling.

Watch for three developments in the coming months: first, how Aardvark integrates with CI/CD and issue trackers; second, the robustness of OpenAI’s sandboxing and safety controls; and third, whether independent security audits confirm the agent’s effectiveness without introducing undue risk. If Aardvark delivers on its promise with transparent safeguards, it may become a standard tool in modern security stacks — but success depends on careful deployment, human oversight, and industry collaboration.

By TheNewsUpdate — Updated Oct 31, 2025